After watching Ryan Jones’ session “What’s new in the Common Data Service”, I ask myself whether “Is Dataverse the future of Finance and Operations apps?” is the right question, or whether it should be: when will it be natively available in the Common Data Service?

WARNING! THIS POST IS LONG OUTDATED: VISUAL STUDIO 2022 HAS BEEN THE DEFAULT IDE SINCE DYNAMICS 365 FINANCE AND OPERATIONS VERSION 10.0.40, AND ON THE VHD SINCE THE APRIL 2024 RELEASE. Tired of developing in Visual Studio 2015? Do you feel you’ve been left behind and forgotten in the past? Worry no more, you can use Visual Studio 2017/2019 to develop for Microsoft Dynamics 365 for Finance & Operations! What are the advantages? Absolutely none at all! Visual Studio will…

You can read my complete guide on Microsoft Dynamics 365 for Finance & Operations and Azure DevOps. In the first part of this post I wrote about the value of Azure DevOps and how to set it up for MSDyn365FO. I want to start this second part with a little rant. As I said in the first part, those who have been working with AX for several years were used to working without version-control systems. MSDyn365FO has…

One of the major changes we got with Dynamics 365 has been the mandatory use of a source control system. In older versions, we had MorphX VCS for AX 2009 and the option to use TFS in AX 2009 and AX 2012, but it wasn’t mandatory. Actually, in my experience, I think most projects used no…

It is possible that after queuing a new build, the job won’t start. It won’t be possible to cancel it either, and nothing will change after rebooting the build server VM. This is an unusual case, but not an impossible one.
The build server
Even though the build machine is exactly like a developer box, it really…

There’s no mystery here, just a misperception. Recently, a colleague found a little issue when using an AOT query to feed a view, with a range dynamically filtered using a SysQueryRangeUtil method.
Recreating the issue
The query is pretty simple, only showing ledger transaction data from the GeneralJournalEntry and GeneralJournalAccountEntry tables. A range on the Ledger field for the current company was added, as you can see in the pic below:
We created a new range method by extending…

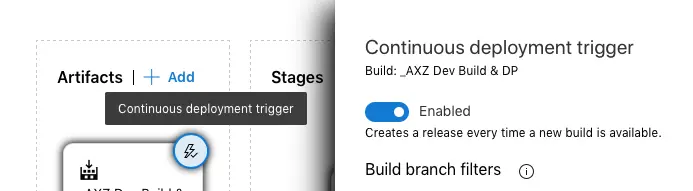

Let’s go… Some weeks ago, the release pipeline extension for #MSDyn365FO was published in the Azure DevOps Marketplace, taking us closer to the continuous integration scenario. While we wait for the official documentation we can check the notes on the announcement, and I’ve written a step-by-step guide to set it up in our projects. To configure the…