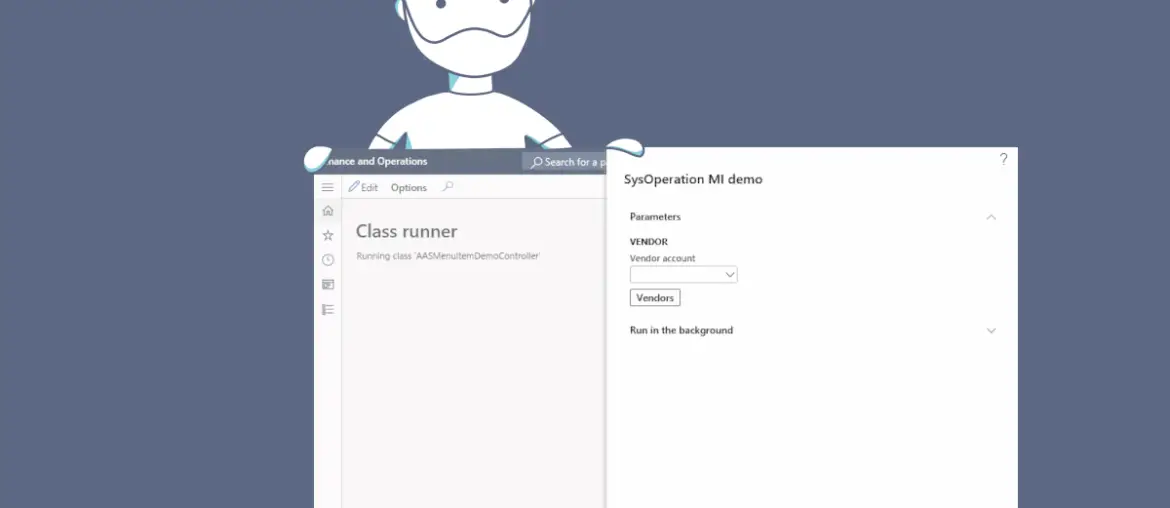

A short one! Some time ago I explained how to add a multi-selection lookup to a SysOperation dialog. In this post I’ll explain how to add a Menu Item as a Function button to a SysOperation dialog.

Before the SysOperation Framework was introduced in AX2012, we used the RunBase Framework. Doing this kind of thing may have looked easier or quicker with RunBase, because all the logic lived in a single class. In the end, though, what we need to do is practically the same. The difference is that with SysOperation we do it in the UIBuilder class.

Let me show you the code and explain it. I’ll only show the DataContract and UIBuilder classes, since they’re the only ones that matter in this case.